Every institution that has lived with a learning platform for more than a decade knows the conversation. A renewal date appears on the calendar, the procurement team asks the obvious question, and the answer comes back almost before anyone has finished asking it: we should stay where we are. Switching is disruptive. The team knows the system. The integrations are in place. The community is trained. We have other priorities.

All of that is true. None of it is sufficient.

The argument I want to make here is not that universities and large institutions should leave their incumbent learning platform. It is that they should stop renewing without doing the work to find out whether they should. The cost of switching is highly visible and easy to overstate. The cost of staying is distributed, partially hidden, and very easy to ignore. A serious decision requires both sides of the ledger.

This piece sets out how to think about that ledger. It is written for the people inside institutions who already suspect their platform is no longer earning its place but find themselves stuck in a cycle of deferred decisions and quiet annual renewals. The intent is to give you the language to put the question on the table properly, not to push you towards any particular outcome.

Why inertia tends to win

Incumbent platform vendors hold a structural advantage that has very little to do with whether their product is the right fit. Switching a learning platform is genuinely hard. Data migration, content re-authoring, retraining, the loss of historical reporting, the management of academic anxiety during the transition: all of this is real, and all of it raises the activation energy required to leave. Vendors know this, and the more sophisticated ones price it in. The result is that institutions routinely renew not because the platform is serving them well, but because switching feels worse than not switching.

This dynamic is most visible in UK higher education, where the Moodle and Blackboard installed bases reflect adoption decisions that sector analyses date to fifteen, twenty, or more years ago. The interesting question is not how those decisions were made then, but why they continue to hold now, in a market that has moved substantially since.

Part of the answer is sector inertia, which is well documented. The more useful answer is that institutions tend to default to the incumbent for four specific reasons, each of which is rational in isolation and misleading in aggregate.

- The strategic decision becomes captive to past investment. Years of work go into making any incumbent platform fit local needs. Custom integrations, plugins, theming, internal training material, support documentation, specialist staff who know the system inside out. This investment is real, and the natural instinct is to protect it. Over time, though, the conversation shifts from "how do we get the most out of this platform" to "how do we keep this platform viable", and the next platform decision starts to be shaped less by what the institution needs in the decade ahead than by what would make the previous decade's effort look wasted. The sunk cost is real. The sunk cost fallacy is what happens when you let it dictate forward strategy.

- Fairness gets translated into "everyone stays put." A defensible instinct, particularly at executive level, is that no learner cohort should get a meaningfully better digital experience than another. This is correct. The trap is when fairness is interpreted as freezing the entire institution at the level of the incumbent platform indefinitely. Equity over time is achieved through planned convergence on a higher standard, not through permanent uniformity at the current one. A controlled, phased adoption that starts where impact is highest, with an explicit roadmap to consistency, is a more defensible position than a freeze that locks every cohort to the lowest common denominator.

- Process becomes a substitute for progress. Universities and large institutions are designed to move carefully, and rightly so. Academic continuity matters, stakeholder confidence matters, and rushed platform decisions are bad platform decisions. But extended evaluation can quietly become a holding pattern. Reviews get scoped, paused, scoped again, and meanwhile the underlying environment continues to accumulate complexity. New integrations land. Workarounds harden into workflows. Specialist knowledge concentrates in fewer people. The platform becomes harder to change with every passing year, which makes the next review feel even more daunting, which extends the cycle. Caution that produces no decision is not caution. It is deferral with a better name.

- "Lower price" gets confused with "lower cost." Self-hosted and heavily customised platforms tend to look financially attractive when the most visible line item is small. The licence cost for Moodle is the canonical example: zero. But the actual spend sits elsewhere, distributed across infrastructure, hosting, security and patching, upgrade testing, capacity planning, specialist staffing, and the day-to-day operational work of keeping the environment healthy. When institutions actually run a comprehensive total cost of ownership (TCO) comparison, the gap between visible cost and total cost is often the single most surprising finding.

Reframing the question

The decision is usually framed as stability versus change. That framing favours the incumbent automatically, because change always sounds riskier than not changing. A more useful framing is this: are we investing in sustaining what we have, or in enabling what we need next?

Both can be legitimate. Sustaining strategies make sense when the current platform is genuinely fit for the next decade and just needs continued maintenance. Enabling strategies make sense when the current platform has structural limitations that no amount of further investment will fix, and where the institution's ambitions sit beyond what the existing system can support.

"The status quo is never free. Every year you stay with a system that isn't working, you're paying for it — in staff time, in lost learner engagement, and in capabilities you simply can't access."

The honest test is whether the current platform is being maintained because it is the right environment for the institution's next decade, or because it has become administratively safer to keep maintaining it. These are not the same thing, and the answer matters.

What you cannot see in the renewal quote

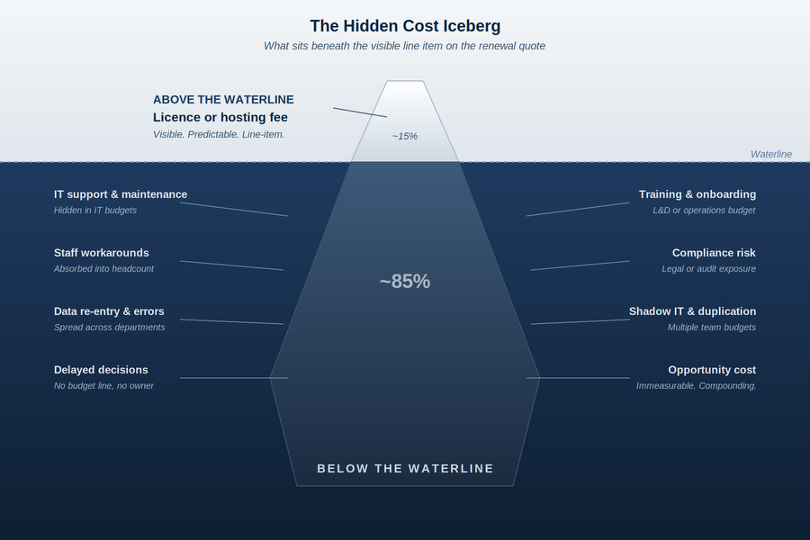

The most useful diagnostic image when discussing legacy platform costs is the iceberg. The visible cost above the waterline is the licence or hosting fee. Below the waterline sits the bulk of the actual spend, distributed across teams that may not even share a budget.

What sits below the line typically includes:

- IT support and maintenance hours absorbed into general infrastructure budgets.

- Staff workarounds that have become invisible because they are now just "how we do things": the spreadsheet that compensates for a reporting limitation, the manual re-enrolment that compensates for blunt automation rules, the export-to-third-party-tool that compensates for a poor authoring environment.

- Training and onboarding overhead that scales with staff turnover.

- Compliance and audit exposure where the platform's logging or reporting is just barely sufficient.

- Shadow systems that have grown up around platform gaps, often paid for out of departmental budgets.

- Data re-entry between systems that should integrate but do not.

- Opportunity cost from capabilities the platform does not support, which by definition does not appear on any invoice.

None of these is dramatic in isolation. In aggregate they routinely exceed the visible licence or hosting fee, often by a significant multiple. A serious platform review is, more than anything else, an exercise in dragging these costs above the waterline so that the renewal decision is made with all of them in view.

The cost of action versus the cost of inaction

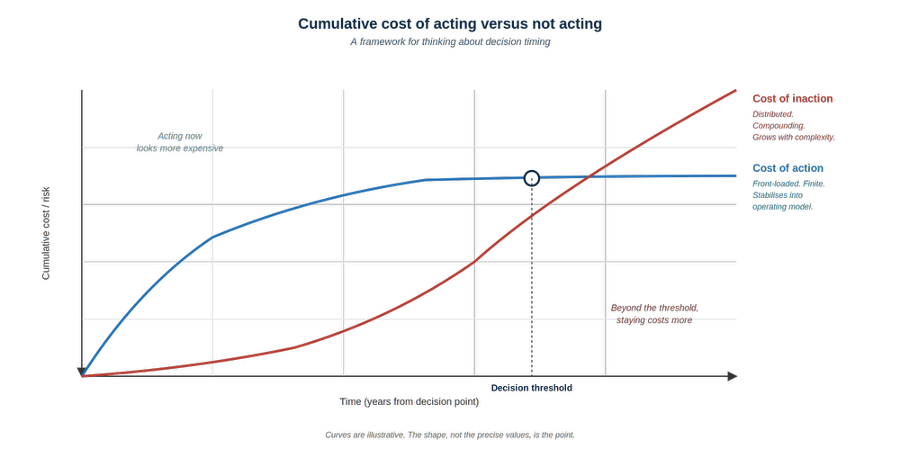

The other diagnostic image worth having in mind is the relationship between the cost of acting and the cost of not acting, plotted over time.

Cost of action is front-loaded, defined, and time-bound: migration, training, change management, content re-authoring, parallel running. It is finite, and it stabilises into a predictable operating model once the transition is complete.

Cost of inaction is the opposite. It is initially low, and it compounds. Each year a heavily customised platform stays in place, more integrations land on top of it, more dependencies build up, more specialist knowledge becomes concentrated in fewer staff, and the eventual cost of changing increases rather than decreases. Defer the decision long enough and the curves cross. Beyond that point, staying is more expensive and higher risk than acting, even though it still feels safer in the moment.

This is the part that most renewal conversations get wrong. They treat the cost of action as immediate and concrete, and the cost of inaction as theoretical. Both are real. The first is just easier to put in a spreadsheet.

For institutions running self-hosted or heavily customised platforms, this threshold is often closer than it appears, and in some cases has already passed. The signal is not usually a dramatic failure. It is a slow accumulation of friction: the upgrade that takes longer than it used to, the integration that breaks more often, the workaround that becomes load-bearing, the senior engineer whose departure would be disproportionately painful.
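
The crossing point described above can be made concrete with a few lines of arithmetic. What follows is a minimal sketch, not a forecasting model: it assumes the cost of action is a one-off transition cost plus a flat annual running cost, and that the cost of inaction starts at today's annual spend and compounds at a fixed rate. The function name, the parameters, and every figure are hypothetical and exist only to illustrate the shape of the two curves.

```python
def crossover_year(transition_cost, new_annual_cost,
                   current_annual_cost, growth_rate, horizon_years=15):
    """Return the first year in which the cumulative cost of staying
    exceeds the cumulative cost of switching, or None if that does not
    happen within the horizon.

    All inputs are assumptions to be replaced with your own estimates.
    """
    cumulative_action = transition_cost      # front-loaded, one-off
    cumulative_inaction = 0.0
    annual_inaction = current_annual_cost    # low at first, then compounding

    for year in range(1, horizon_years + 1):
        cumulative_action += new_annual_cost
        cumulative_inaction += annual_inaction
        if cumulative_inaction > cumulative_action:
            return year
        # Friction accumulates: next year's cost of staying is higher.
        annual_inaction *= 1 + growth_rate
    return None


# Hypothetical figures: 600k to migrate, 400k a year afterwards,
# versus 450k a year today growing at 8% a year.
print(crossover_year(600_000, 400_000, 450_000, 0.08))  # 5
```

The most contestable input is the growth rate. Running the same calculation with a pessimistic and an optimistic value shows how sensitive the crossing point is to that single assumption, which is usually more informative than any one answer.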

A workable TCO framework

If you are genuinely trying to put the renewal decision on a proper footing, the framework below covers the categories that need to be in scope. Apply it to the status quo and to any alternative being evaluated. The point is not precision to the pound; it is to make sure that all the categories appear in both columns of the comparison.

Licence and hosting cost: what you pay the vendor or the hosting provider directly.

Integration cost: bespoke development to connect the platform to other institutional systems, plus the ongoing maintenance of those integrations.

Administration time: hours per week spent managing, configuring, and working around the platform, costed at fully loaded staff rates.

Content development overhead: additional tools, time, or third-party services required because of platform limitations.

Support and training: ongoing cost to keep staff competent on the platform, including replacement cost for specialist roles.

Security and compliance overhead: patching cycles, vulnerability management, audit preparation, and the staff time to maintain them.

Opportunity cost: the value of capabilities the platform does not currently provide, weighted by how much they matter to the institution's strategy. This is the hardest column to populate, and the most likely to change the result.

Switching cost: the genuine cost of migration, modelled honestly. This belongs in the alternative's column, but so does every saving from eliminating the workarounds, integrations, and overheads in the current setup.

When the comparison is done properly, the switching cost is often paid back within the first contract period, sometimes earlier. When it is not, that is a useful answer too: it tells you the incumbent is genuinely the right platform and the renewal can be signed with confidence rather than resignation.
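
One way to keep both columns honest is to hold them in the same structure, so that a category cannot appear on one side and be missing from the other. The sketch below assumes annualised figures for each category in the framework above and treats switching cost as a one-off. The class name, field names, and all numbers are hypothetical; the payback calculation is deliberately simple (no discounting, no inflation) and is meant as a starting point for discussion rather than a finance-grade model.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PlatformCosts:
    """Annual cost per framework category, plus a one-off switching cost."""
    licence_hosting: float
    integration: float
    administration: float        # hours x fully loaded staff rate
    content_overhead: float
    support_training: float
    security_compliance: float
    opportunity_cost: float      # hardest to estimate; state the basis
    switching_cost: float = 0.0  # one-off; zero for the status quo

    def annual_total(self) -> float:
        return (self.licence_hosting + self.integration
                + self.administration + self.content_overhead
                + self.support_training + self.security_compliance
                + self.opportunity_cost)


def payback_years(current: PlatformCosts,
                  alternative: PlatformCosts) -> Optional[float]:
    """Years for annual savings to repay the alternative's switching cost.

    Returns None when the alternative is not cheaper to run, which is
    itself a useful answer.
    """
    annual_saving = current.annual_total() - alternative.annual_total()
    if annual_saving <= 0:
        return None
    return alternative.switching_cost / annual_saving


# Hypothetical figures only.
status_quo = PlatformCosts(0, 120_000, 150_000, 40_000,
                           60_000, 90_000, 100_000)
candidate = PlatformCosts(250_000, 40_000, 60_000, 10_000,
                          40_000, 10_000, 20_000, switching_cost=390_000)

print(status_quo.annual_total())             # 560000
print(candidate.annual_total())              # 430000
print(payback_years(status_quo, candidate))  # 3.0
```

Comparing the payback figure against the length of the contract period being negotiated gives a direct test of the claim that switching cost is recovered within the first term.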

The questions worth asking at renewal

If you are heading into a renewal conversation with your incumbent vendor, there is a short list of questions that usefully reset the dynamic. They are not designed to be hostile. They are designed to surface information that the standard renewal conversation tends to skip.

What percentage of customers on our version of the platform have renewed in the last three years? Retention rates tell you something the sales deck will not.

What does our actual usage data show? Active user percentages, completion rates, feature adoption. Low adoption often reveals a platform that is being paid for but not used.

Which features on the roadmap you showed us eighteen months ago have actually been delivered? Roadmap promises are easy. Delivery is the evidence.

What would it cost us to leave, in detail and in writing? Data portability and migration support should be explicit, not assumed.

What is included in our current contract that we are not using? Many institutions are paying for capability they have never been enabled on.

One further question is worth singling out, because it gets at a structural problem that the others do not. A meaningful proportion of institutional risk paralysis around platform change is engineered, not inherent. The IMS Common Cartridge standard for packaging and exchanging course content, published by IMS Global (now 1EdTech), has existed since 2008 and is the recognised mechanism for portable content between learning platforms. Despite this, several incumbent platforms ship export workflows that are partial, multi-step, or quietly omit the elements that would make migration tractable, particularly assessments, question banks, and gradebook structure. The result is that the apparent cost of leaving is inflated by design. Asking the question directly, with reference to the standard, surfaces this:

"Can you demonstrate full IMS Common Cartridge export of our content, including assessments, question banks, and gradebook structure, in a single workflow? If not, which elements require manual reconstruction, and what is the documented hour cost per course?"

Vendors certified against the standard should be able to produce a working export on request. Where they cannot, the gap belongs in the switching-cost model, not in your assumptions about what migration would involve.
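
If the vendor does produce an export, it is worth looking inside it rather than taking completeness on trust. A Common Cartridge package is a zip archive with an `imsmanifest.xml` at its root, and each item in the manifest is a `resource` element with a `type` attribute. The sketch below counts resources by type and flags whether anything resembling an assessment or a question bank is present. The marker strings `"assessment"` and `"question-bank"` are assumptions based on commonly seen type values and may differ between versions of the standard and between vendors; the demo manifest is invented for illustration. Gradebook structure is not checked, because the sketch does not assume any particular representation for it.

```python
import io
import xml.etree.ElementTree as ET
import zipfile
from collections import Counter

# Assumed substrings of the resource `type` attribute; verify against
# the version of the standard your vendor is certified for.
EXPECTED_MARKERS = ("assessment", "question-bank")


def resource_type_counts(cartridge) -> Counter:
    """Count <resource> elements by `type` in a cartridge's manifest.

    `cartridge` is a path or a binary file-like object.
    """
    with zipfile.ZipFile(cartridge) as archive:
        manifest = archive.read("imsmanifest.xml")
    counts = Counter()
    for element in ET.fromstring(manifest).iter():
        # Drop the XML namespace, which varies between versions.
        local_name = element.tag.rsplit("}", 1)[-1]
        if local_name == "resource":
            counts[element.get("type", "(no type)")] += 1
    return counts


def missing_elements(counts: Counter) -> list:
    """Return the expected markers that match no resource type."""
    return [marker for marker in EXPECTED_MARKERS
            if not any(marker in rtype for rtype in counts)]


# Invented two-resource manifest, packaged in memory for the demo.
DEMO_MANIFEST = """<manifest identifier="demo">
  <resources>
    <resource identifier="r1" type="webcontent" href="page.html"/>
    <resource identifier="r2" type="imsqti_xmlv1p2/imscc_xmlv1p1/assessment"/>
  </resources>
</manifest>"""

demo = io.BytesIO()
with zipfile.ZipFile(demo, "w") as archive:
    archive.writestr("imsmanifest.xml", DEMO_MANIFEST)
demo.seek(0)

print(missing_elements(resource_type_counts(demo)))  # ['question-bank']
```

Run against a real export of a representative course, a non-empty result is a prompt for a follow-up question to the vendor, not proof of omission: the content may be present under a type string this sketch does not recognise.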

The simple act of asking these questions tends to change the renewal dynamic, even if you ultimately stay. Vendors that know switching is painful and that you have not seriously considered alternatives tend to negotiate accordingly. Vendors that know you have looked elsewhere, even if you did not move, negotiate differently.

Where Canvas fits in this picture

It would be incomplete to discuss this without acknowledging the platforms most institutions evaluate. The UK and global market data is reasonably consistent on this point. Canvas is used by nearly a third of Russell Group universities, and sector analyses consistently identify a core group of four products dominating global higher education: Moodle, Blackboard (Anthology), Canvas (Instructure), and Brightspace (D2L). Within that group, the direction of movement has been clear for several years, with Canvas and Brightspace gaining share at the expense of the older incumbents.

What Canvas offers that is materially different is not a longer feature list. The market analyses that look closely at functional comparison tend to conclude that the major LMS platforms are about 90% similar in core capability, with each having a distinguishing 10%. The meaningful difference is in operating model. A cloud-native SaaS platform shifts infrastructure, security, scaling, upgrades, and continuous improvement out of the institution's responsibility and into a predictable service framework. That is a different value proposition from "the same job, done slightly differently". It is a re-allocation of where institutional effort gets spent.

Whether that re-allocation is right for any specific institution depends on the TCO comparison, the strategic ambitions, the appetite for change, and the maturity of the existing setup. Canvas is not always the answer. But for institutions whose internal effort is currently absorbed in keeping the platform alive rather than improving teaching and learning on top of it, the case is increasingly hard to argue against on the merits.

Closing

A decision made by inertia is still a decision. It is just one that did not get made deliberately. For something as central to institutional life as the learning platform, that is not a defensible way to operate. Either the incumbent is genuinely the right platform for the next decade, in which case a proper review will confirm it and the renewal can be signed with confidence, or it is not, in which case the cost of finding out sooner is almost always lower than the cost of finding out later.

The work of putting this question on the table properly is not glamorous. It involves surfacing costs that nobody currently owns, building TCO models for things that have never been costed, and asking vendors questions they would prefer not to answer. But it is the work that turns the renewal conversation from a default into a decision, and that distinction matters more than the eventual outcome.

If you are working through a platform review and want to talk through the approach, you can find me on LinkedIn.